Machine learning technology is opening up new strategies to find and prosecute the men who profit from the worst of the illegal sex trade

“Hey there. You available?”

“Yes I am… how long did you want company for?”

“Was hoping for an hour, maybe half. Depends.”

“Half. 125 hour. 200 donation.”

“Gotcha. Can you stay for half hour then?”

“You’re not a cop or affiliated with any law enforcement, right?”

“Ha. No. You?”

In the thrum of texts that flit across cell towers every day, arranging sex from online ads, this was just another exchange. What followed, however, was anything but ordinary.

On June 4, 2015, a 33-year-old woman pulled off a road in Pennsylvania’s Lehigh Valley and knocked on the door of room 209 at the Staybridge Suites. She expected an assignation with the man who had sent the earlier texts. What she got, once she accepted payment, was a sting. The man was a state trooper.

So far, a pretty normal prostitution bust. But then things took a turn. The woman told the troopers that she actually hadn’t been the one texting. The texter was a man she knew as “GB,” and he was waiting for her in a silver Acura out in the hotel parking lot. She also wasn’t the only woman GB was working. There were others, including a teen the woman once saw GB smack to the ground. There were drugs involved, too.

When troopers found GB in the car, he was on his phone posting a sex ad on the classified advertising site Backpage. There was another young woman with him. The troopers arrested him, took the phone, and wrote him up on charges of promoting prostitution and related offenses.

But Lehigh County Deputy District Attorney Robert Schopf saw the potential for something more. After further investigation, Schopf amended the charges to include sex trafficking in individuals and geared up for a hard legal fight. Unlike promoting prostitution — often a misdemeanor in Pennsylvania, and even if it rises to felony, the maximum sentence is seven years — trafficking adults is always a felony, with a penalty of up to 10 years. But for that Schopf would have to prove that GB, aka Cedric Boswell, now 46, of Easton, Pennsylvania, had not only knowingly promoted the women for sex, but had actually led them into it by fraud, force, or coercion. And to do that, Schopf would have to convince a jury that would be thinking, as he put it, “‘Well, wait a minute. She walked out of that hotel room every day. Where are these chains?’”

Boswell’s case seemed no different. A forensics dump of his cell phone produced 6,306 images, mostly of scantily-clad women. It also turned up a promising favicon, or favorite icon: posting.backpage.com, the page on the now-defunct website where sex workers, pimps, and traffickers could place ads for women selling sexual services. At the time, Backpage was the Amazon of the escort industry, the first place people would go to sell sex — and the first place law enforcement would go to track them down. In fact, Backpage was where the Pennsylvania State Troopers had found the original ad that led them to the GB bust.

The phone was full of evidence, but what the police had was circumstantial. The challenge for prosecutors was connecting those photos to actual escort ads. “We just don’t have that amount of time to manually look through the [images on the phone] and then try to compare: Is this the girl that I’m seeing on Backpage?” says Julia Kocis, director of Lehigh County’s Regional Intelligence and Investigation Center (RIIC), a web-based system run by the DA’s office that aggregates local crime records and supports law enforcement. “Where do I even begin to find them?”

But Kocis had an idea. She had heard about a new tool called Traffic Jam that was specifically designed to aid sex trafficking investigations with the help of artificial intelligence. Traffic Jam had been created by Emily Kennedy, and had grown out of her study of machine learning for criminal investigations as a research analyst at the Robotics Institute at Pittsburgh’s Carnegie Mellon University — as well as what Kennedy called “a lot of talking to detectives about their pain points” around sex trafficking cases.

Kocis, who was by then working closely with Schopf on the case, decided to try a free trial of Traffic Jam. “I ran the images and phone number through the tool,” she says, “and it brought back the ads he’d posted in minutes. Then I futzed around with it, and it showed a map of where the phone number was used to post girls at different locations, and over time.”

Kocis couldn’t believe how effective the tool was — and neither could Schopf. “Julia sent it to me and it was just awesome,” he says. “When you have something that critical, [the Traffic Jam evidence] is a smoking gun. I mean, there was absolutely no way for [Boswell] to get out from underneath that evidence.”

The original 33-year-old woman was reluctant to testify, but she agreed after Schopf told her about the corroborating evidence obtained with the help of Traffic Jam. After two hours of deliberation, on April 19, 2016, a jury found Cedric Boswell guilty of several crimes, including trafficking in individuals. He is currently serving a sentence of 13 to 26 years in state prison.

Traffic Jam is part of a cluster of new tech tools bringing machine learning and artificial intelligence to the fight against sex trafficking, a battle that over the nearly 20 years since the Trafficking Victims Protection Act was signed into law has been stuck in a weary stalemate. A.I. is no magic solution to a highly complex problem, but for early adopters it is speeding up sex trafficking investigations, allowing one DA’s office to conduct 10 times as many investigations as it used to, while making them 50 to 60% faster. Driven by everyone from Traffic Jam’s Kennedy (whose program has helped crack at least 600 cases) and celebrities like Demi Moore and Ashton Kutcher to the government’s Defense Advanced Research Projects Agency (DARPA) and major corporations like IBM, these machine learning tools work the crime from every angle: finding victims, following money trails, and confronting johns. They can do in seconds what could take investigators months or even years, assuming they ever get to it at all. And the A.I. never takes a coffee break.

The technology has arrived in time to help investigators navigate a newly chaotic underworld. After growing pressure from Congress, federal authorities last April shut down Backpage.com, a move they hoped would stymie traffickers posing their victims as willing sex workers. But the closure — which, it should be noted, was opposed by many sex workers who are in the field by choice and who feared it would force them to return to walking the streets — seems to have backfired. According to several new A.I. tools, the commercial sex business is not only more robust than ever, it has now splintered. A Traffic Jam analysis showed there were 133,000 new sex ads a day on Backpage before it closed. Six months later, that number had risen to 146,000 on leading escort sites, taking into account the common practice of smaller sites reposting ads. “Some new sites were getting so much traffic they kept crashing,” says Kennedy. “I think it will continue to spread out, but always evolve.”

The result is that law enforcement, which relied for years on Backpage for leads, now doesn’t know where to start its investigations. “Traffickers adapt and they learn what you’re doing,” says Nic McKinley, the founder of DeliverFund, a nonprofit that assists with these sex trafficking investigations. “If your technology can’t also learn, then you’ve got a problem. A.I. has to be part of the solution.”

It increasingly is. In 2014, when Kennedy founded Traffic Jam, Kutcher and Moore launched their sex trafficking investigation tool Spotlight, which is used by more than 8,000 law enforcement officers. That same year DARPA launched a $70 million effort dubbed Memex that funded 17 partners to develop other search and analytic tools to help fight human trafficking. The campaign ended in 2017, but several new machine learning tools came out of the effort that are assisting the National Center for Missing & Exploited Children (NCMEC), the Manhattan DA’s office, and the Homeland Security Investigations (HSI) field office in Boston (at least according to DARPA — HSI doesn’t confirm their investigative tools). “DARPA would send folks up to literally sit next to our analysts and watch as they went step by step through an investigation,” says Assistant District Attorney Carolina Holderness, chief of Manhattan’s human trafficking unit. “Then [they’d] go directly back to the software developers and adjust the tool.”

Holderness’s team tried out two Memex products, but TellFinder, the one they settled on, was created by the nonprofit arm of a Toronto software company called Uncharted that makes tools for law enforcement. David Schroh, who heads up TellFinder’s six-person team, says they were drawn to the project after realizing how little technology was being employed to fight sex trafficking. Similar to Traffic Jam but with a broader scope, TellFinder slaloms through terabytes of the deep web to search where Google can’t. Along the way, its machine learning algorithms match language patterns in similarly written ads and recognize faces. “We make it very easy for someone trying to look for a person to find all the ads she’s been in,” says Chris Dickson, TellFinder’s technical lead.

Even in its pilot stage, TellFinder’s speed proved it could make or break a case. In November 2012, a man named Benjamin Gaston kidnapped a 28-year-old woman who had advertised sex online. He took her wallet and phone, raped her, and forced her to prostitute for him. Desperate after two days of captivity, she tried to escape out the window, only to plummet six stories to the ground. “She survived,” says Holderness, “but broke every bone in her body, just about.”

By the time of the trial in 2014, the woman had made a miraculous recovery. After that fall, she told the jury, she’d never engaged in prostitution. But the defense then showed up with recent sex ads that made it appear that she had willingly gone back to the life. The ads would not only undermine her credibility as a witness, they also offered exculpatory evidence for the defense’s argument that she’d only jumped out of the window because she was emotionally unstable and a drug abuser.

Back at the DA’s office, Holderness’s team put the ads in TellFinder. “We knew right away they were being posted in places where the woman was not located,” she says. “Someone else was using her photograph, using her ad, to get customers for herself, which is a common practice.” The defense backed down, and Gaston got 50 years to life.

“To be able to do that overnight — it actually saved that whole prosecution,” says Holderness.

Law enforcement has failed to put a significant dent in the sex trafficking industry for a number of reasons. Prostitution may be illegal in nearly every part of the U.S. — save a handful of counties in Nevada with regulated brothels — but there are women who engage in sex work of their own volition, and separating them from people who are being trafficked against their will isn’t always easy. This is the rare crime where victims rarely come forward to seek help, and without the victims, it’s hard to hunt down the predators. Nor is it easy to find the women in online ads; traffickers hide them from search engines by inserting emoji between phone number digits, for example, or by forcing the girls to constantly wear wigs and change their appearance. And now, after the Backpage ban, it’s even easier to dodge detection.

But TellFinder, Spotlight, and Traffic Jam are trained expressly to know these tactics and to get around them; this is the kind of data-driven challenge A.I. is built to surmount. The other major challenge A.I. is equipped to take on is data overload. Piecing together the evidence to prove trafficking — even to recognize it in the first place — is complex and time-consuming. “You’ll have an investigator who starts looking at a case and at some point, a supervisor is saying, ‘We’ve got to wrap; let’s just go with what we’ve got,’” says Bill Woolf, who worked in law enforcement in the Washington D.C. area for 15 years and who just launched the National Human Trafficking Intelligence Center to train those on the frontlines. “I mean sometimes you get a search warrant return from Facebook, and it’s thousands and thousands of pages. Who has time to go through it? These tools can find what you need in seconds. With A.I., you’re going to be much more likely to see the whole picture.”
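To make the emoji trick concrete: hiding a phone number by dropping emoji between its digits only defeats a search that expects the digits to sit side by side. The sketch below is a minimal illustration of undoing that, not the method used by TellFinder, Spotlight, or Traffic Jam, whose internals the article does not describe. The function name `normalize_phone` and the sample ad text (with a fictional 555 number) are assumptions made for the example.

```python
import unicodedata


def normalize_phone(text: str) -> str:
    """Return only the digits found in `text`, in order.

    Emoji, punctuation, spaces, and letters are dropped. Non-ASCII
    digit characters (for example fullwidth digits) are mapped to
    their plain 0-9 values. Hypothetical helper for illustration.
    """
    digits = []
    for ch in text:
        # unicodedata.digit returns the numeric value of a digit
        # character, or the supplied default (None) for anything else.
        value = unicodedata.digit(ch, None)
        if value is not None:
            digits.append(str(value))
    return "".join(digits)


# Fictional example: the same number written three different ways.
plain = "610-555-0142"
with_emoji = "610\U0001F48B555\U0001F48B01\U0001F52542"
fullwidth = "\uFF16\uFF11\uFF10 555 0142"

canonical = {normalize_phone(s) for s in (plain, with_emoji, fullwidth)}
print(canonical)
```

Once every variant collapses to the same string of digits, ads that looked unrelated to a keyword search can be grouped under one number.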

Holderness remembers one case in particular. Her team received an email from an employee at Covenant House, a New York City shelter for runaway kids. “She told us, ‘Look, one of our staff members found a recruitment ad,’” says Holderness. “‘Is there anything you guys can do with this?’” The ad was posted on Craigslist and read: “Want to stop living in Covenant House shelter? Need Free room & board? Employment & Financial assistance? Room available immediately!” The ad went on to note: “Should have an interest in escort business making $1000+ weekly.” That point was underscored with a photo of clinking bottles of 1800 Silver tequila and Hennessy next to a shot of $100 bills tossed over a leather chair.

Recruiting runaways from a shelter is especially cold-blooded. One out of five homeless girls ends up being sex trafficked, according to Covenant House research done with the University of Pennsylvania and Loyola University New Orleans. But who was behind the ad? Before TellFinder and similar A.I. programs, while police may have been able to track the poster down, they may not have known that he actually trafficked anyone, much less an entire network of young women. When Holderness ran the phone number through TellFinder, “instantly we find a bunch of other ads on multiple sites,” she says. Not only was he recruiting girls online, he was also selling them for prostitution. The DA’s office subpoenaed the sites for all the records relating to the posting of the ad, retrieving phone numbers, email addresses, and other personal data that led them to Michael Lamb, now 37, from Essex, New Jersey. The intel also pointed investigators to his victims. With their testimony, Lamb was sentenced in December 2017 to six to 18 years in prison for sex trafficking and promoting prostitution.

Since adopting TellFinder, Holderness says, “We’ve been able to go from about 30 human trafficking investigations a year to 300.” The office has pledged $4 million a year in funding, up to three years, to help scale the tool, which is free to investigators.

TellFinder is also used by NCMEC, which received 7,500 tips on its cyber tip line last year related to possible sex trafficking. The A.I. looks for kids in sex ads, but it also scrapes so-called buyer review boards and hobby boards — essentially the Yelp of the commercial sex trade where men rate in detail the girls they’ve bought. TellFinder is trained to pick up on certain keywords in the reviews that can indicate coercion, like “teen” or “she has bruises” or “heroin.” (Getting a girl hooked is a common tactic pimps use to keep her dependent on him for drugs.) “We have seen reviews for missing kids that say something to the effect of, ‘She was really young, she seemed scared.’ And then go on to describe the rape and exploitation the child suffered,” says Staca Shehan, executive director of NCMEC’s case analysis division. “And then go on to review [their experiences with the girl].”
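In its simplest form, flagging reviews by keyword amounts to checking each text against a list of indicator phrases. The following is a rough sketch under that assumption. It uses only the three example phrases quoted above, and plain phrase matching stands in for whatever trained model TellFinder actually uses. The names `INDICATOR_PHRASES` and `flag_review` are hypothetical.

```python
import re

# The three example indicators quoted in the article; a real list
# would be far longer and maintained by analysts.
INDICATOR_PHRASES = ["teen", "she has bruises", "heroin"]


def flag_review(text, phrases=INDICATOR_PHRASES):
    """Return the indicator phrases that appear in `text` as whole words.

    Matching is case-insensitive. Word boundaries keep "teen" from
    matching inside longer words such as "nineteen".
    """
    lowered = text.lower()
    hits = []
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, lowered):
            hits.append(phrase)
    return hits


print(flag_review("She has bruises on her arm and mentioned heroin."))
print(flag_review("Arrived at nineteen hundred hours."))
```

A non-empty result would only mark a review for a human analyst to read; a keyword hit alone proves nothing about coercion.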

Shehan, who has been with the organization for nearly 20 years, stays positive by focusing on the successes. And she believes that over the last five years TellFinder — along with Spotlight and Traffic Jam, which NCMEC also uses — has made a difference. “I can’t give a metric,” she says, “but we can now find ads where the child is being sold right now and alert law enforcement. When we’re talking about a trafficked child, who is being abused and raped daily, reducing the time it takes to locate them is just crucial.”

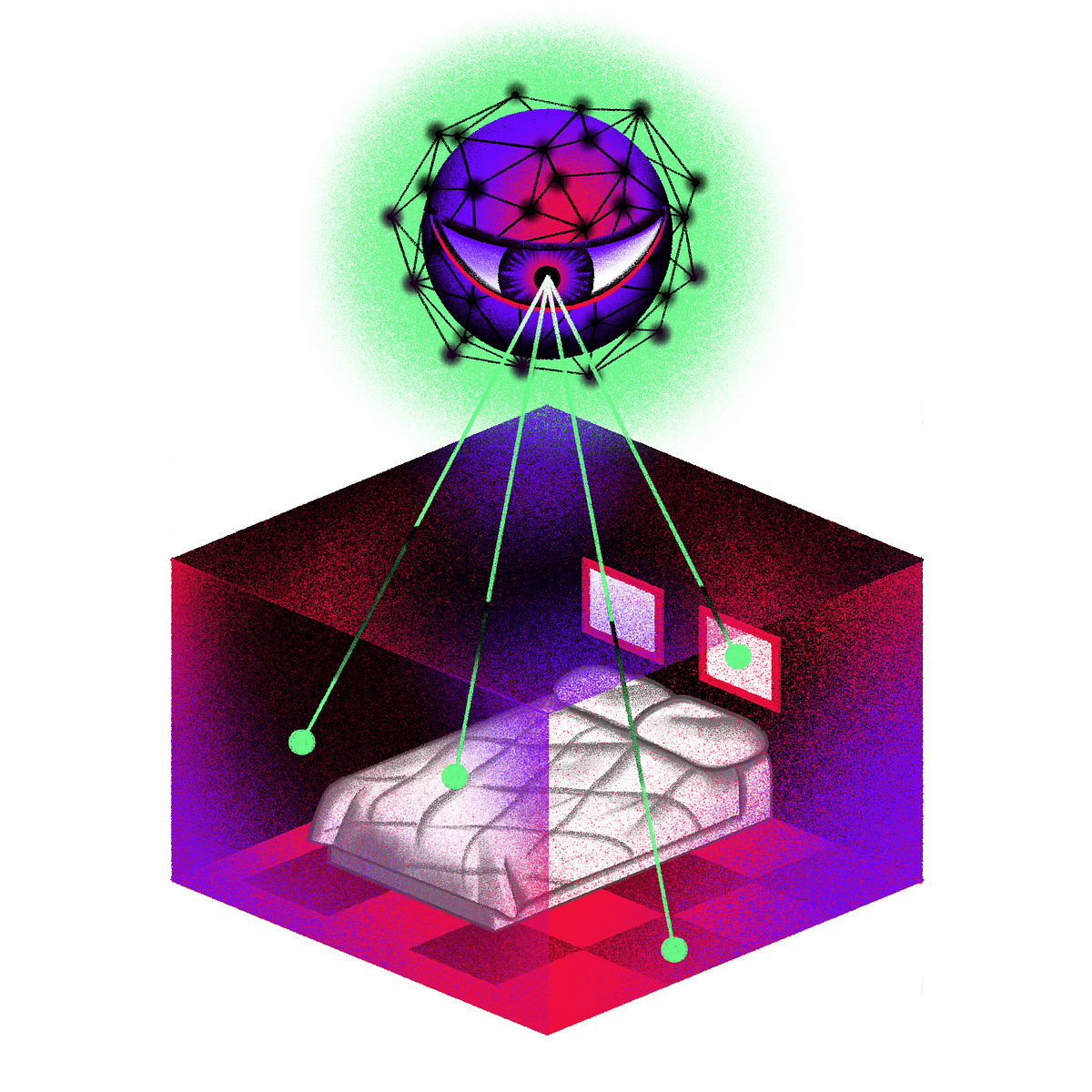

The next generation of trafficking tools takes mining images for clues to the next level. NCMEC is already using one of them, called TraffickCam. Because sex ads are often shot in the hotel and motel rooms where victims are forced to work, TraffickCam is designed to analyze the carpets, furniture, and room accessories in an image’s background to narrow down which hotel the subject has been photographed in. The project took root when colleagues Molly Hackett, Jane Quinn, and Kimberly Ritter, professional event planners in St. Louis who knew the city’s hotels well, started helping local police identify the rooms in photos. They soon realized technology could scale what they were doing and launched Exchange Initiative to make it happen. TraffickCam followed a few years later.

Built by researchers at Washington University in St. Louis, TraffickCam can detect the subtle differences in decor between chain hotel locations, a skill that will be improved with a new $1 million grant from the Department of Justice. (Anyone can help by expanding the platform’s database; download the app and just snap photos every time you arrive at your hotel.)

Another new tool with eagle-eye detection is XIX, which is able to recognize people in blurry photos via mirrors and at different ages. “You can say, ‘Go find this person in an image off a security camera or a side shot of someone off a Facebook page,’” says McKinley of DeliverFund. “With other tools we’re often not successful, but with XIX we’re getting 98 to 99% hit rates.”

Even more promising, XIX can also tag objects. Users can ask it to find all photos of a particular girl with a particular guy, or a black pickup truck with a specific license plate, or even words on a T-shirt. “We can go on Instagram and search for photos of cash fanned out on the bed in the background — a pretty strong signal — and even sometimes extract the serial numbers on the bills,” says Emil Mikhailov, founder of the San Francisco-based company XIX.ai that makes the tool.

Another new A.I. innovation, developed by Robert Beiser, executive director of the anti-trafficking nonprofit Seattle Against Slavery, takes aim at the buyers themselves. The tool works like this: A would-be john texts a girl in an ad, and she responds. They go back and forth about prices, services, fetishes, meeting spot. But as soon as the buyer has made clear he’s willing to pay for sex, he gets a message to the effect of: This could have been a trafficking victim. Law Enforcement now has your information and might follow up with you. Click here for counseling so you can stop doing this.

The girl, it turns out, is a bot.

The program is available to police departments and local governments who customize their own message for johns, choosing among the bot’s various personas — teenage, adult, inexperienced. To operate the program, users place a decoy sex ad, and whenever a potential buyer responds, the bot (who remains nameless and never reveals its identity) starts chatting. All the while the prospective customer’s data — including age and address — flows in to investigators, who can decide whether or not to take action.

The bot was trained with the help of Microsoft engineers who used transcripts of court cases like the one in Lehigh Valley, where cops were pulling a sting. “With the A.I., the bot learned what the conversational flow was,” says Beiser. He then brought in sex trafficking survivors to nail down the language.

Beiser says that since launching in 2017, the bot has been adopted by more than 10 police departments, and has already disrupted 18,000 transactions. Although subtler than handcuffs and prison time, the technology confronts one of the most stubborn challenges of bringing down sex trafficking: “the very ingrained fantasy in this culture about commercial sex in that everybody is a willing participant,” says Jennifer Long, founder of AEquitas, a nonprofit that works to improve the prosecution of human trafficking. Bradley Myles, head of Polaris, the NGO that runs the National Human Trafficking Hotline, agrees. “Even if men aren’t getting arrested, it’s piercing the anonymity of buying sex,” he says. “It’s a shock to the system.”

Timea Nagy wishes these tools had been available when she was trafficked. As a 20-year-old in Budapest, she answered an ad promising quick money in Canada. She thought she’d be working as a nanny, or at worst cleaning houses. Instead she wound up captive in a Toronto motel, forced into sex work and erotic dancing. Nagy only escaped by finding a couple of people in the club and pointing to words like “scared” and “help” in her Hungarian-English dictionary.

Two of her perpetrators were never caught and now, 21 years later, she still has nightmares about running from them. By day she fights back, however, and her many years of activism include advising Traffic Jam and now working with DeliverFund. Still, Nagy offers a cautionary reminder to those who hype the power of A.I.: technology is only as good as the people who use it. “I think artificial intelligence is fantastic,” she adds. “The new software is fantastic. But if it gets into the wrong hands, it’s worth nothing — or worse, it does more damage. And again, who’s going to be left behind? The victims.”

The same facial recognition and machine learning algorithms that help track down sex traffickers can also trawl through the oceans of our own personal data to draw conclusions about our moods, politics, sexual preferences, addictions, and other behaviors we’d rather not share — assuming they even get us right, which many algorithms fail to do. If A.I. tools are built off biased data sets, they’ll be biased in turn. Civil rights advocates have raised serious concerns about the use of facial recognition algorithms in identifying criminal suspects. This is especially true for minorities — research by Georgetown Law School estimated 117 million American adults are in facial recognition networks used by law enforcement, and that African Americans were most likely to be singled out.

Some trafficking advocates simply bristle at bringing in machine learning to solve one of the most deeply human and emotionally intricate of crimes. They also feel that the Traffic Jams and TellFinders are missing the mark. “These tools are mostly a waste of very scarce resources,” says Jessica Hubley, a telling remark coming from the co-founder of a nonprofit, AnnieCannons, whose mission is training trafficking survivors in tech skills.

Hubley argues that providing a solid path to legal employment changes the calculus more than busting traffickers, which too often leaves victims out on the streets vulnerable to the next predator. AnnieCannons graduates not only get good jobs, but they are also designing tech solutions they’d like to see, including a mobile app to help with restraining orders and a platform to crowdsource anonymous sexual assault reports.

Still, as A.I. tools continue to evolve, they’re expanding beyond bots and search engines. IBM, which has donated nearly $2 million in resources toward the fight against human trafficking, is launching an effort to bring together major banks with other players to pursue the illegal gains from prostitution. Traffickers, after all, need to hide the profits of their crime.

IBM’s new A.I.-powered Traffik Analysis Hub, developed with the U.K. charity Stop The Traffik, is a secure platform for financial institutions, NGOs, and law enforcement to share anonymized data. That means survivors’ narratives, police reports, suspected incidents of fraud or money laundering, as well as news stories that a separate A.I. tool scours the web for each day. The Hub is able to blend and layer all that disparate data, spotting red flags and unusual trends along the way. “Each partner will use the data to further their own efforts to counter trafficking,” explains Sophia Tu, IBM’s director of citizenship and technology.

So, for example, the Hub could provide law enforcement with more leads, help nonprofits see new vulnerable communities, and guide financial institutions to suspicious transactions and account behavior related to money laundering. Once those cases have been looked into, “the information is then passed to law enforcement, who investigate the activity which may result in a prosecution,” says Neil Giles, director of intelligence-led prevention at Stop the Traffik. Western Union, Barclays, and Lloyds have already signed on.

Back in Lehigh County, the DA’s office is building its own A.I. system — a first of its kind — to proactively go after traffickers. With expertise from Long’s prosecutor group, AEquitas, and trafficking researcher Colleen Owens, whose nonprofit is called The Why, the new system will mine law enforcement data that includes close to 8 million individuals and over 4 million police incident records for lesser crimes, in an effort to establish patterns that could start or speed human trafficking investigations.

For example, a police report of a man busted for selling drugs might mention three young female bystanders in the room. The system will flag it and check to see if those women have shown up in other reports for prostitution, or as victims of rape or assault, or with “ingredients in the cocktail traffickers give victims to incapacitate them,” says Owens. Put those pieces together, and investigators can start to draw a picture of trafficking.
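The cross-check in that example can be sketched as a lookup: index earlier reports by the people named in them, then see which people in a new report already appear in reports of a related kind. This is a simplified illustration, not the Lehigh County system, which had not been built at the time of writing. The `Report` fields, the category labels, and the `cross_reference` function are all assumptions made for the sketch.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    report_id: str
    category: str      # hypothetical labels, e.g. "drugs", "assault"
    person_ids: tuple  # everyone named in the report, in any role


# Categories treated as related for this illustration only.
RELATED = {"prostitution", "rape", "assault"}


def cross_reference(new_report, history, related=RELATED):
    """Map each person in `new_report` to earlier related reports naming them."""
    by_person = defaultdict(list)
    for report in history:
        if report.category in related:
            for pid in report.person_ids:
                by_person[pid].append(report.report_id)
    # Keep only people from the new report who have related history.
    return {
        pid: by_person[pid]
        for pid in new_report.person_ids
        if pid in by_person
    }


history = [
    Report("R1", "prostitution", ("p1",)),
    Report("R2", "theft", ("p2",)),
    Report("R3", "assault", ("p1", "p3")),
]
drug_bust = Report("R4", "drugs", ("p1", "p2", "p4"))

links = cross_reference(drug_bust, history)
print(links)
```

Here only one bystander in the drug report, `p1`, turns up in earlier related reports. An overlap like that is a lead for an analyst to examine, not a conclusion.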

“As new reports flow in every day, our system will alert an analyst and say, ‘I know you were looking into this kind of thing; something happened last night that connects these five things over here, with these other five things over there, and we think you should look at it further,’” says Daniel Lopresti, the chair of Lehigh University’s department of computer science and engineering, who will help develop the A.I. tool. Plans include a new facility to help the survivors the system uncovers.

All of that could have prevented the moment Boswell’s victim walked into the Staybridge Suites — and perhaps even gotten her help before she met him. “I grew up in an alcoholic home with a father who was sexually abusive to me,” the woman, who declined to be interviewed, told the jury, and since then, all she knew was “drugs, the lifestyle on the streets, money.” But after Boswell’s arrest, she had gotten sober and turned herself in to serve time for drug crimes she was convicted of after the sting. She was brought to the trial from a prison in New Jersey, where she had been sentenced to four years.

“Through whatever path that I’ve had in my lifetime I’ve always asked God, ‘Why these things?’” she told the courtroom. “There is nothing a human being can do in this world to deserve these things. And if it just meant there is one person that I can help by this, I feel free.”